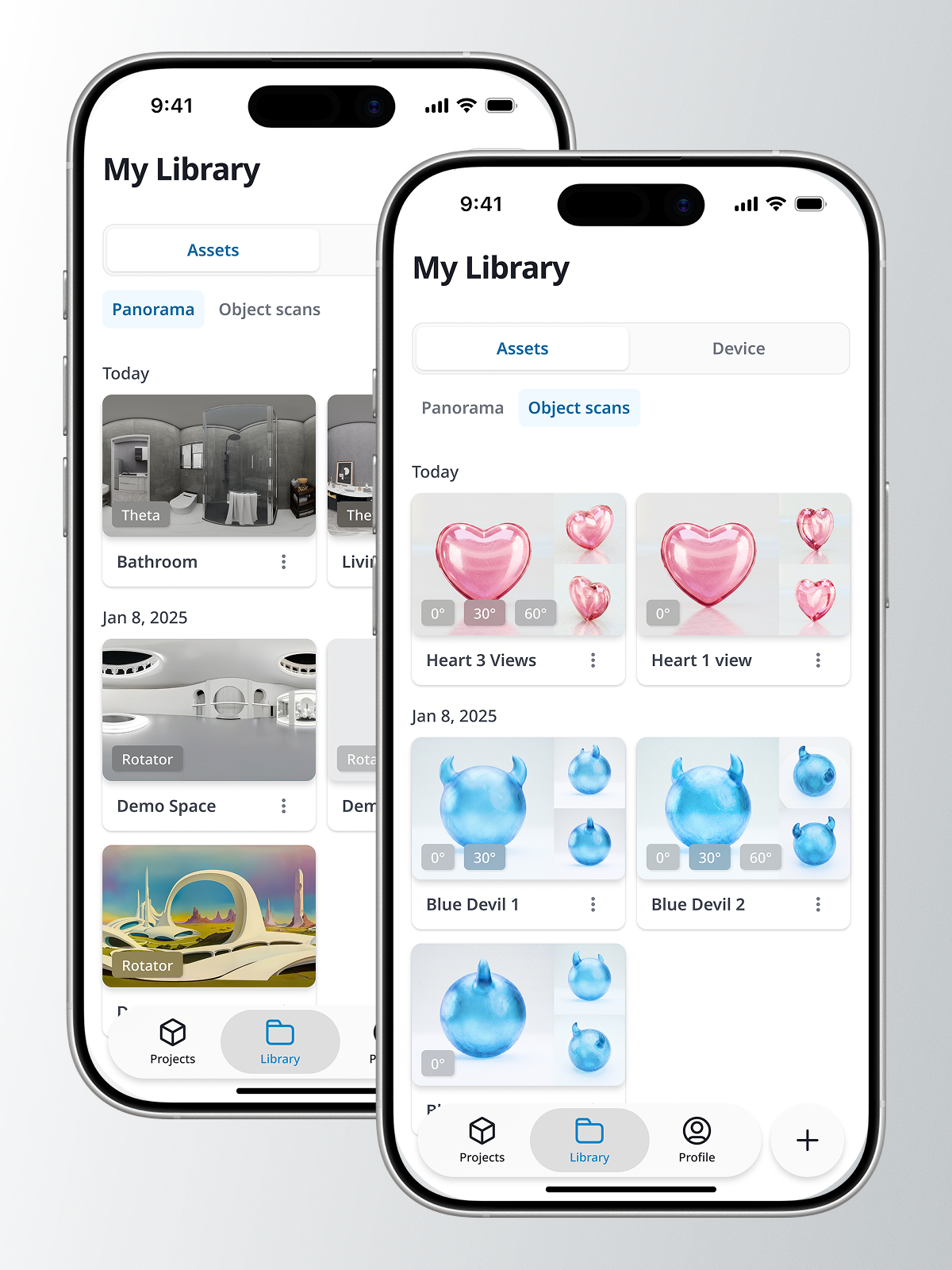

iStaging ONE — Unified 3D Capture App

One app that captures panoramas, scans 3D objects, and manages immersive content across three product ecosystems.

Role

Design Lead

Cross-product UX strategy, capture workflow design, interaction design

Duration

6+ months (ongoing)

Tools

Figma, After Effects, Jira

Team

Web Tsai

Platform Director

Jafee Cho

Product Manager

Jerry Liao

Technical Manager

Eason Yang

App Developer

Jessie Hu

Back-End Developer

Press & Media

Cyberbiz App Market